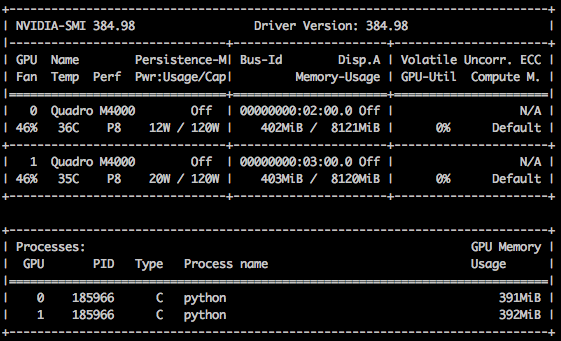

Multi-gpu compatibility: torch-summary for 'cuda:1' and so on by adithyavis · Pull Request #93 · sksq96/pytorch-summary · GitHub

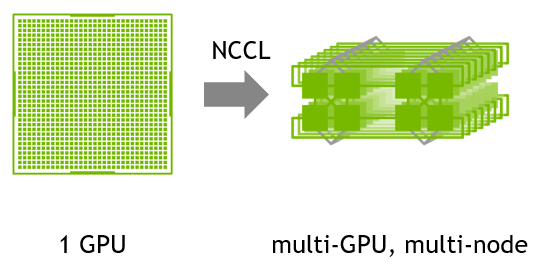

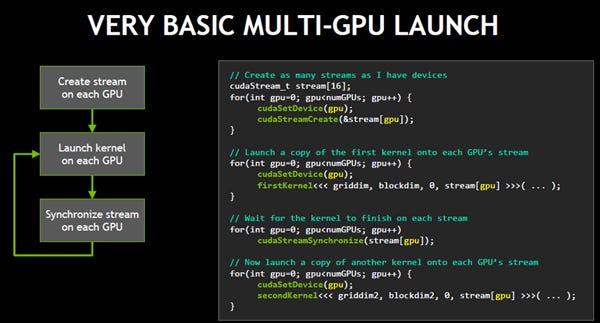

(PDF) Effective multi-GPU communication using multiple CUDA streams and threads | Xing Cai - Academia.edu

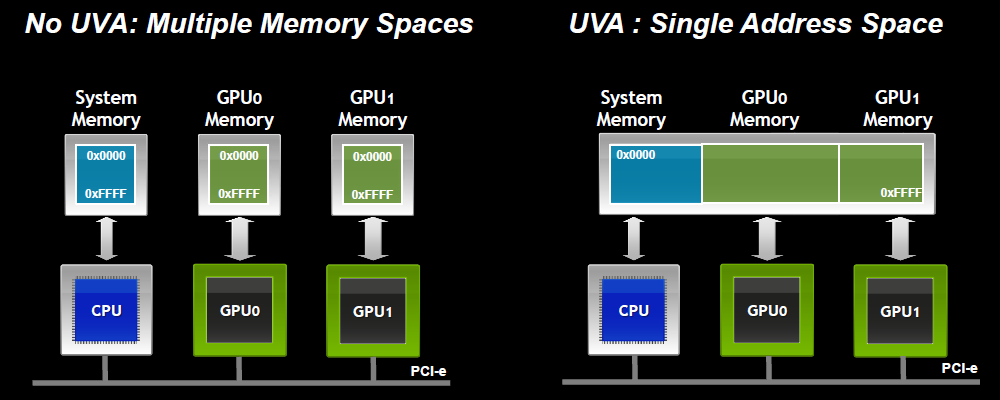

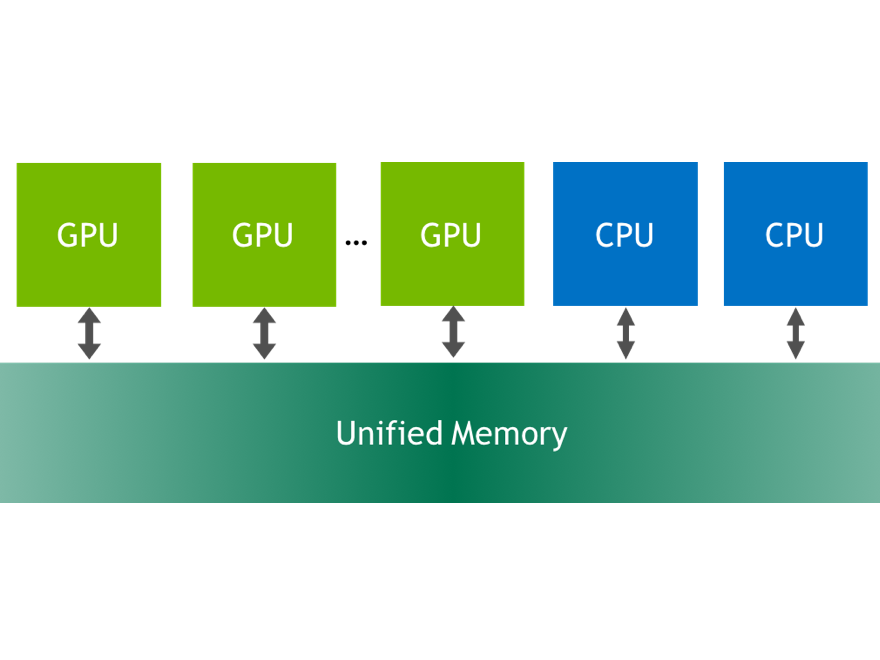

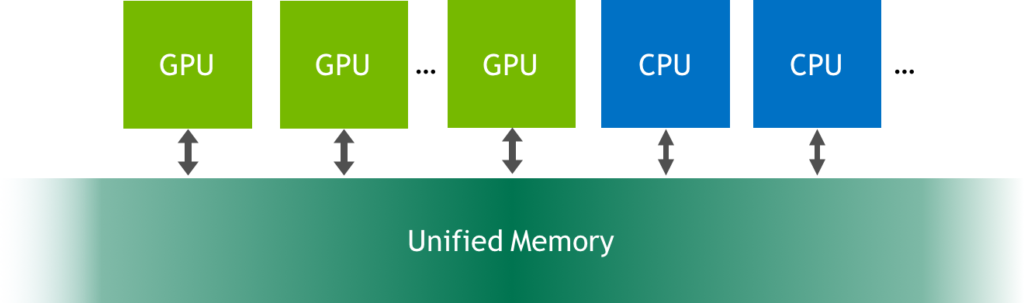

Inside NVIDIA's Unified Memory: Multi-GPU Limitations and the Need for a cudaMadvise API Call - TechEnablement

![\[PDF\] Effective multi-GPU communication using multiple CUDA streams and threads | Semantic Scholar](https://d3i71xaburhd42.cloudfront.net/77830356061996b6fe062759f3e61a3805b25d04/2-Figure2-1.png)